!wget -nc --no-cache -O init.py -q https://raw.githubusercontent.com/rramosp/2021.deeplearning/main/content/init.py

import init; init.init(force_download=False);

import numpy as np

import tensorflow as tf

import matplotlib.pyplot as plt

import pandas as pd

%matplotlib inline

%load_ext tensorboard

from sklearn.datasets import *

from local.lib import mlutils

from IPython.display import Image

from skimage import io

tf.__version__

Object detection¶

Approaches:¶

Classical: Sliding window, costly

Two Stage Detectors: First obtain proposed regions, then classify.

One Stage Detectors: Use region priors on fixed image grid
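To get a feeling for why the sliding window approach is costly, the sketch below counts how many windows a classifier would have to process. The image size, window sizes and stride are hypothetical values chosen only for illustration.

```python
def sliding_windows(img_h, img_w, win_h, win_w, stride):
    """Yield (top, left, bottom, right) for every window fully inside the image."""
    for top in range(0, img_h - win_h + 1, stride):
        for left in range(0, img_w - win_w + 1, stride):
            yield top, left, top + win_h, left + win_w

# hypothetical image size, window sizes and stride (not taken from the notebook)
img_h, img_w = 768, 1024
n_windows = sum(
    len(list(sliding_windows(img_h, img_w, s, s, stride=16)))
    for s in (64, 128, 256)
)
# every window would require one forward pass of the patch classifier
print(n_windows)
```

Even with only three square window sizes this yields several thousand patches per image, which is what two stage and one stage detectors try to avoid.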

Observe how an image is annotated for detection¶

This is an example from the Open Images V6 Dataset, a dataset created and curated at Google. Explore and inspect images and annotations to understand the dataset.

Particularly:

get a view of the dataset size and class descriptions in https://storage.googleapis.com/openimages/web/factsfigures.html

understand the image annotation formats in https://storage.googleapis.com/openimages/web/download.html

We download the class descriptions

!wget -nc https://storage.googleapis.com/openimages/v5/class-descriptions-boxable.csv

c = pd.read_csv("class-descriptions-boxable.csv", names=["code", "description"], index_col="code")

c.head()

An example image

img = io.imread("local/imgs/0003bb040a62c86f.jpg")

plt.figure(figsize=(12,10))

plt.imshow(img)

with its annotations

boxes = pd.read_csv("local/data/openimages_boxes_0003bb040a62c86f.csv")

boxes

the annotations of this image

pd.Series([c.loc[i].description for i in boxes.LabelName]).value_counts()

from matplotlib.patches import Rectangle

i = np.random.randint(len(boxes))

plt.figure(figsize=(12,10));

ax = plt.subplot(111)

plt.imshow(img)

h,w = img.shape[:2]

for i in range(len(boxes)):
    k = boxes.iloc[i]
    label = c.loc[k.LabelName].values[0]
    # box coordinates are normalized to [0,1], so scale them to pixels
    ax.add_patch(Rectangle((k.XMin*w,k.YMin*h),(k.XMax-k.XMin)*w,(k.YMax-k.YMin)*h, linewidth=2,edgecolor='r',facecolor='none'))
    plt.text(k.XMin*w, k.YMin*h-10, label, fontsize=12, color="red")
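The box coordinates in the annotations are normalized to the image size, which is why they are multiplied by w and h before drawing. A minimal sketch of that conversion, with a hypothetical helper name and made-up numbers:

```python
def to_pixel_rect(xmin, ymin, xmax, ymax, img_w, img_h):
    """Convert a normalized [0, 1] box to (x, y, width, height) in pixels."""
    x, y = xmin * img_w, ymin * img_h
    return x, y, (xmax - xmin) * img_w, (ymax - ymin) * img_h

# made-up box and image size, for illustration only
rect = to_pixel_rect(0.25, 0.5, 0.75, 1.0, img_w=1024, img_h=768)
print(rect)
```

The returned tuple has the argument order that matplotlib's Rectangle expects: the top left corner first, then width and height.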

Patch classification, with InceptionV3 from Keras¶

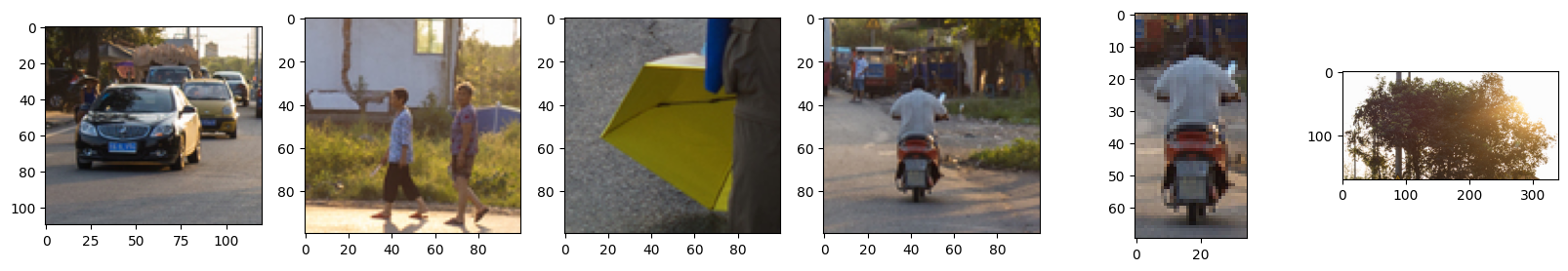

some sample patches

patches = [img[190:300, 150:270],

img[200:300, 600:700],

img[400:500, 400:500],

img[200:300, 300:400],

img[220:290, 325:360],

img[10:180, 330:670]]

plt.figure(figsize=(20,3))

for i,pimg in enumerate(patches):
    plt.subplot(1,len(patches),i+1); plt.imshow(pimg)

from tensorflow.keras.applications import inception_v3

if "model" not in locals():
    model = inception_v3.InceptionV3(weights='imagenet', include_top=True)


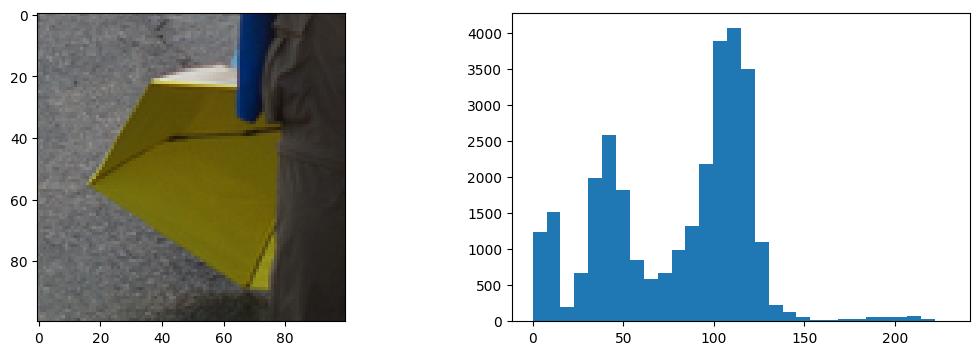

def plot_img_with_histogram(img):
    # image on the left, histogram of its pixel values on the right
    plt.figure(figsize=(13,4))
    plt.subplot(121)
    plt.imshow(img, vmin=np.min(img), vmax=np.max(img))
    plt.subplot(122)
    plt.hist(img.flatten(), bins=30);

pimg = patches[2]

plot_img_with_histogram(pimg)

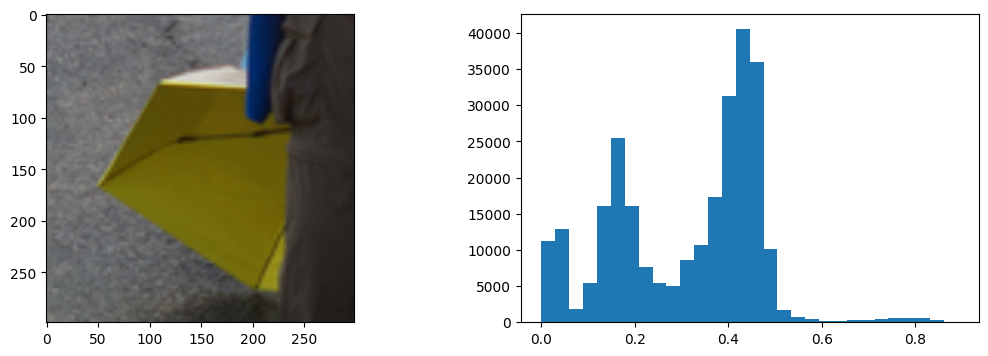

from skimage.transform import resize

rimg = resize(pimg, output_shape=(299,299,3))

plot_img_with_histogram(rimg)
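Observe in the histogram that resize returns floats in [0, 1]. The Keras helper inception_v3.preprocess_input maps pixel values in [0, 255] to [-1, 1], so one may want to feed the model values in that range. The sketch below shows the equivalent mapping starting from [0, 1] floats. The helper name and the random stand-in for rimg are assumptions for illustration, and this step is not applied in the cells of this notebook.

```python
import numpy as np

def to_inception_range(x01):
    """Map floats in [0, 1] to [-1, 1], the range InceptionV3 preprocessing produces."""
    return x01 * 2.0 - 1.0

rng = np.random.default_rng(0)
fake_patch = rng.random((299, 299, 3))   # random stand-in for rimg
scaled = to_inception_range(fake_patch)
print(scaled.min(), scaled.max())
```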

pred = model.predict(rimg.reshape(-1,*rimg.shape))

pred.shape

(1, 1000)

k = pd.DataFrame(inception_v3.decode_predictions(pred, top=100)[0], columns=["code", "label", "preds"])

k = k.sort_values(by="preds", ascending=False)


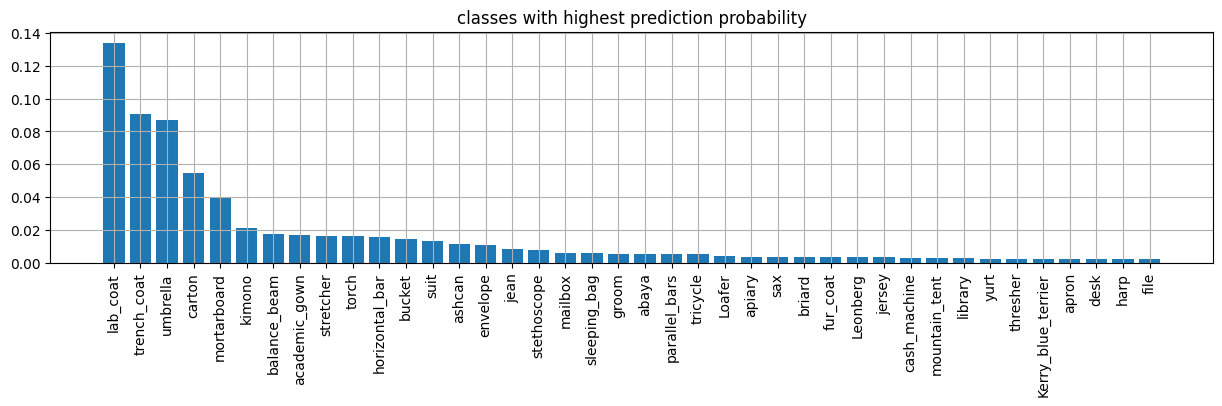

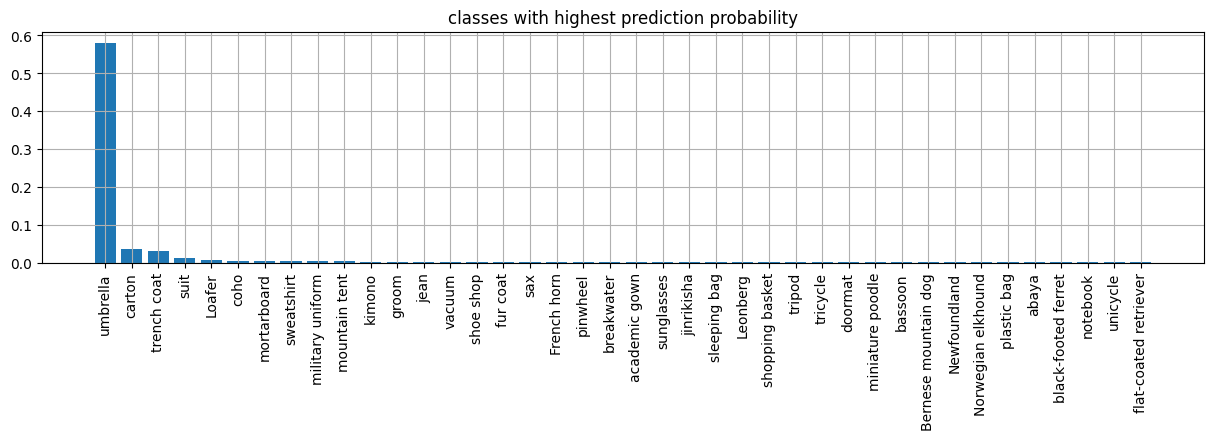

plt.figure(figsize=(15,3))

n = 40

plt.bar(range(n), k[:n].preds.values)

plt.xticks(range(n), k[:n].label.values, rotation="vertical");

plt.title("classes with highest prediction probability")

plt.grid();

observe how we decode prediction classes. We would need to align them with our detection dataset.

print ('Predicted:')

k = inception_v3.decode_predictions(pred, top=10)[0]

for i in k:
    print("%10s %20s %.6f"%i)

Predicted:

n03630383 lab_coat 0.134186

n04479046 trench_coat 0.090892

n04507155 umbrella 0.087079

n02971356 carton 0.054701

n03787032 mortarboard 0.039948

n03617480 kimono 0.021387

n02777292 balance_beam 0.017429

n02669723 academic_gown 0.016952

n04336792 stretcher 0.016528

n04456115 torch 0.016192
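One possible way to align ImageNet labels with the class descriptions of a detection dataset is to match them by normalized name. This is only a sketch under that assumption: the two short lists below are made-up excerpts, and real vocabularies would also need synonyms and hierarchy handling.

```python
def normalize(name):
    """Lowercase and unify separators so 'Lab_Coat' and 'lab coat' compare equal."""
    return name.lower().replace("_", " ").strip()

# tiny made-up excerpts of both vocabularies, for illustration only
imagenet_labels = ["lab_coat", "trench_coat", "umbrella", "kimono"]
detection_descriptions = ["Umbrella", "Tree", "Person", "Kimono"]

detection_by_name = {normalize(d): d for d in detection_descriptions}
alignment = {
    lab: detection_by_name[normalize(lab)]
    for lab in imagenet_labels
    if normalize(lab) in detection_by_name
}
print(alignment)
```

Labels without a counterpart (here lab_coat and trench_coat) are simply left out of the mapping.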

Patch classification, with an Inception-ResNet model published on TensorFlow Hub¶

import tensorflow_hub as hub

classnames = pd.read_csv('https://storage.googleapis.com/download.tensorflow.org/data/ImageNetLabels.txt', names=["label"])

if 'm' not in locals():
    m = hub.KerasLayer("https://tfhub.dev/google/imagenet/inception_resnet_v2/classification/4")

[w.shape for w in m.weights]

[TensorShape([1, 1, 1088, 192]),
 TensorShape([3, 1, 224, 256]),
 TensorShape([3, 3, 3, 32]),
 ...
 TensorShape([128]),
 TensorShape([256])]

preds = m(rimg.reshape(-1,*rimg.shape).astype(np.float32)).numpy()[0]

preds = np.exp(preds)/np.sum(np.exp(preds))

np.sum(preds)

np.float32(1.0000001)

names = classnames.copy()

names["preds"] = preds

names = names.sort_values(by="preds", ascending=False)

names.head()

plt.figure(figsize=(15,3))

n = 40

plt.bar(range(n), names[:n].preds.values)

plt.xticks(range(n), names[:n].label.values, rotation="vertical");

plt.title("classes with highest prediction probability")

plt.grid();
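The hub model returns logits, which is why a softmax is applied above before plotting. A numerically safer variant subtracts the maximum logit before exponentiating. The sketch below uses made-up logits that would overflow with the direct formula.

```python
import numpy as np

def softmax(logits):
    """Softmax that subtracts the max logit first to avoid overflow in exp."""
    z = logits - np.max(logits)
    e = np.exp(z)
    return e / e.sum()

# made-up logits, large enough for np.exp(logits) to overflow
logits = np.array([1000.0, 1001.0, 1002.0])
probs = softmax(logits)
print(probs, probs.sum())
```

Subtracting a constant from all logits does not change the result, since the constant cancels between numerator and denominator.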

One stage detectors¶

This blog post, YOLO v3 theory explained, contains a detailed explanation of how YOLOv3 builds a prediction for detections.

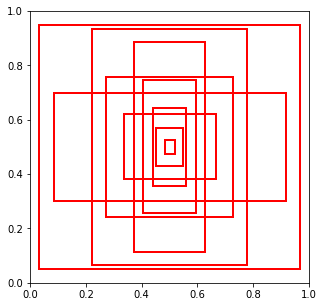

Region priors¶

A set of box shapes representative of what appears in the training dataset. They are typically obtained with KMeans, and one must decide how many to use. For instance:

Image("local/imgs/anchor_boxes.png", width=300)

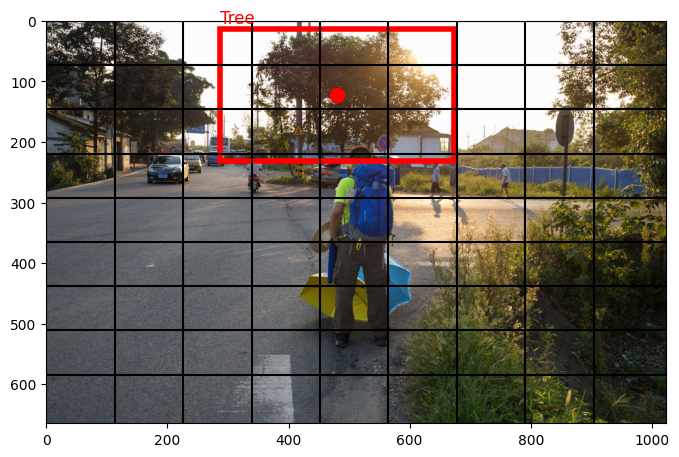
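A minimal sketch of how region priors could be obtained by clustering box sizes. It runs plain k-means with Euclidean distance on synthetic (width, height) pairs. Both the data and the helper name are assumptions for illustration, and the YOLO9000 paper clusters with a distance based on IOU instead.

```python
import numpy as np

def kmeans_anchors(wh, k, n_iter=50, seed=0):
    """Cluster (width, height) pairs into k anchor shapes with plain Lloyd iterations."""
    rng = np.random.default_rng(seed)
    # start from k distinct boxes picked at random
    centers = wh[rng.choice(len(wh), size=k, replace=False)].astype(float)
    for _ in range(n_iter):
        # squared Euclidean distance of every box to every center: shape (n, k)
        d = ((wh[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        assign = d.argmin(axis=1)
        for j in range(k):
            members = wh[assign == j]
            if len(members) > 0:   # keep the old center if a cluster empties
                centers[j] = members.mean(axis=0)
    return centers, assign

# synthetic box shapes normalized to the image size: two obvious groups
rng = np.random.default_rng(1)
small = rng.normal([0.1, 0.2], 0.01, size=(50, 2))
large = rng.normal([0.6, 0.4], 0.01, size=(50, 2))
wh = np.vstack([small, large])

anchors, assign = kmeans_anchors(wh, k=2)
anchors = anchors[np.argsort(anchors[:, 0])]   # sort by width for readability
print(anchors)
```

The number of clusters k is the number of anchor boxes, which is the decision mentioned above.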

Image fixed partition

each fixed-size image cell is responsible for predicting the objects whose center falls within that cell.

for instance, the red dot below marks the tree center and thus the cell responsible for its prediction.

fig = plt.figure(figsize=(8,8))

ax = plt.subplot(111)

plt.imshow(img)

n = 9

for i in range(n):
    plt.axvline(img.shape[1]//n*i, color="black")
    plt.axhline(img.shape[0]//n*i, color="black")

k = boxes.iloc[0]

label = c.loc[k.LabelName].values[0]

ax.add_patch(Rectangle((k.XMin*w,k.YMin*h),(k.XMax-k.XMin)*w,(k.YMax-k.YMin)*h,

linewidth=4,edgecolor='r',facecolor='none'))

plt.text(k.XMin*w, k.YMin*h-10, label, fontsize=12, color="red")

plt.scatter(k.XMin*w+(k.XMax-k.XMin)*w*.5,k.YMin*h+(k.YMax-k.YMin)*h*.5, color="red", s=100)

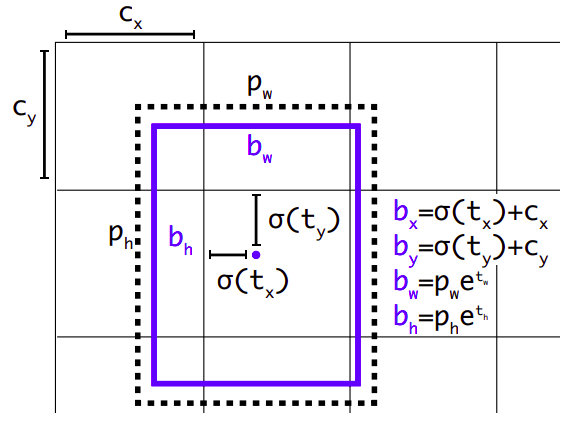
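The assignment of an object to a cell can be sketched as follows, assuming normalized box coordinates as in the annotations above and an n x n grid. The helper name and the box values are made up for illustration.

```python
def responsible_cell(xmin, ymin, xmax, ymax, n):
    """Return (row, col) of the grid cell containing the box center,
    plus the center offset from that cell's top left corner, in cell units."""
    cx, cy = (xmin + xmax) / 2, (ymin + ymax) / 2
    col = min(int(cx * n), n - 1)   # clamp so a center at 1.0 stays in the grid
    row = min(int(cy * n), n - 1)
    return row, col, cx * n - col, cy * n - row

# made-up normalized box on a 9 x 9 grid
row, col, off_x, off_y = responsible_cell(0.10, 0.20, 0.50, 0.90, n=9)
print(row, col, round(off_x, 3), round(off_y, 3))
```

The offsets lie in [0, 1) for centers inside the image, which is the quantity a detector regresses for the center position.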

Predictions¶

for each cell and each anchor box the model will make a prediction that will contain:

$t_x$, $t_y$: the offset of the object center to the cell's top left corner.

$t_w$, $t_h$: the width and height of the object bounding box as referred to the anchor box size.

$t_o$: a proxy for the probability of an object's center being present at that cell: $\sigma(t_o) = Pr(\text{object}) \cdot IOU(b, \text{object})$

$p_1, \dots, p_C$: a vector of class probabilities

observe that:

$p_w$, $p_h$ are the dimensions of the anchor box.

the sigmoid function is used to bound offset coordinates.

the exponential function is used to keep sizes positive and to provide larger gradients when $t_w$, $t_h$ are large.

we are interested in both probability and IOU of the bounding box.

Therefore for each cell and anchor box we have $5+C$ predictions, $C$ being the number of classes in our dataset.

Image taken from YOLO9000: Better, Faster, Stronger

Image("local/imgs/yolo_predictions.png")
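A minimal sketch of how the raw outputs for one cell and one anchor box could be decoded, following the parametrization of the YOLO9000 paper: $b_x = \sigma(t_x) + c_x$, $b_y = \sigma(t_y) + c_y$, $b_w = p_w e^{t_w}$, $b_h = p_h e^{t_h}$. The function name and the sample values are assumptions for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def decode(t, cell_xy, anchor_wh):
    """Decode raw outputs (t_x, t_y, t_w, t_h, t_o) for one cell and one anchor.
    Returns the box center and size in grid-cell units, plus the objectness score."""
    tx, ty, tw, th, to = t
    cx, cy = cell_xy          # top left corner of the cell, in cell units
    pw, ph = anchor_wh        # anchor box (region prior) dimensions
    bx = sigmoid(tx) + cx     # sigmoid keeps the center inside the cell
    by = sigmoid(ty) + cy
    bw = pw * np.exp(tw)      # exponential keeps sizes positive
    bh = ph * np.exp(th)
    return bx, by, bw, bh, sigmoid(to)

# all-zero raw outputs: center in the middle of the cell, size equal to the anchor
decoded = decode(t=(0.0, 0.0, 0.0, 0.0, 0.0), cell_xy=(2, 4), anchor_wh=(1.5, 3.0))
print(decoded)
```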

Typically, CNNs will

downsample image dimensions to $S \times S$, the number of cells defined, and will do a 2D convolution with $B \times (5+C)$ channels, $B$ being the number of anchor boxes and $C$ the number of classes.

repeat the same process on earlier CNN layers (for instance when the activation map is $2S \times 2S$ or larger), so there is a set of prediction boxes for each of those resolutions.

This will be ok to predict large objects, but small ones get lost in CNN downsampling. To overcome this, different architectures use different techniques:

YOLOv3 makes predictions in earlier CNN layers besides the last one.

RetinaNet downsamples the image and then upsamples activation maps to integrate (with a sort of skip connections) high-level semantic information from late layers with spatial information from earlier layers.

See this blog for further intuitions on this.
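To get a sense of how many predictions a multi-scale detector produces, the sketch below counts boxes and output values. The configuration is an assumption taken from the usual YOLOv3 setup (416 x 416 input, strides 32, 16 and 8, 3 anchors per scale, 80 classes), not from this notebook.

```python
def n_predictions(input_size, strides, n_anchors, n_classes):
    """Count predicted boxes and output values over all prediction scales."""
    total_boxes = 0
    for s in strides:
        cells = input_size // s                  # grid side at this scale
        total_boxes += cells * cells * n_anchors
    # each box carries 4 coordinates, 1 objectness score and n_classes scores
    return total_boxes, total_boxes * (5 + n_classes)

n_boxes, n_values = n_predictions(416, strides=(32, 16, 8), n_anchors=3, n_classes=80)
print(n_boxes, n_values)
```

Most of these boxes correspond to background, so they must be filtered by score before any overlap handling.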

Loss function¶

Observe that we are doing BOTH regression (for boxes) AND classification (for object classes). A specific loss function must then be devised to take this into account.

See this blog post and this blog post for detailed explanations.
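A simplified sketch of such a combined loss for a single cell and anchor box: squared error for the box, and binary cross entropy for objectness and classes. The weight lambda_box and the exact terms are assumptions for illustration. Actual detectors differ in the details.

```python
import numpy as np

def bce(p, y, eps=1e-7):
    """Binary cross entropy, clipped to avoid log(0)."""
    p = np.clip(p, eps, 1 - eps)
    return -(y * np.log(p) + (1 - y) * np.log(1 - p))

def detection_loss(pred_box, true_box, pred_obj, true_obj, pred_cls, true_cls,
                   lambda_box=5.0):
    """Loss for one cell/anchor; box and class terms count only where an object exists."""
    box_term = true_obj * np.sum((np.asarray(pred_box) - np.asarray(true_box)) ** 2)
    obj_term = bce(pred_obj, true_obj)
    cls_term = true_obj * np.sum(bce(np.asarray(pred_cls), np.asarray(true_cls)))
    return lambda_box * box_term + obj_term + cls_term

# made-up predictions for a cell that does contain an object of class 0
good = detection_loss([0.5, 0.5, 1.0, 1.0], [0.5, 0.5, 1.0, 1.0], 0.99, 1.0, [0.98, 0.01], [1, 0])
bad = detection_loss([0.1, 0.9, 2.0, 2.0], [0.5, 0.5, 1.0, 1.0], 0.20, 1.0, [0.30, 0.60], [1, 0])
# an empty cell: only the objectness term contributes
empty = detection_loss([0.3, 0.3, 1.0, 1.0], [0.0, 0.0, 0.0, 0.0], 0.01, 0.0, [0.5, 0.5], [0, 0])
print(good, bad, empty)
```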

Non maximum suppression¶

Finally, as there might be many box predictions at different cells and resolutions, a decision must be made for overlapping predictions. This is non-maximum suppression, and you can check this blog for a detailed explanation.
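A minimal sketch of greedy non-maximum suppression in NumPy, assuming boxes given as (x1, y1, x2, y2) corners and one score per box. The sample boxes, scores and the 0.5 threshold are made up for illustration.

```python
import numpy as np

def iou(box, boxes):
    """IoU between one box and an array of boxes, all as (x1, y1, x2, y2)."""
    x1 = np.maximum(box[0], boxes[:, 0])
    y1 = np.maximum(box[1], boxes[:, 1])
    x2 = np.minimum(box[2], boxes[:, 2])
    y2 = np.minimum(box[3], boxes[:, 3])
    inter = np.clip(x2 - x1, 0, None) * np.clip(y2 - y1, 0, None)
    area = (box[2] - box[0]) * (box[3] - box[1])
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    return inter / (area + areas - inter)

def nms(boxes, scores, iou_threshold=0.5):
    """Keep the highest scoring box, drop boxes overlapping it too much, repeat."""
    order = np.argsort(scores)[::-1]      # indices sorted by decreasing score
    keep = []
    while len(order) > 0:
        best = order[0]
        keep.append(int(best))
        rest = order[1:]
        overlaps = iou(boxes[best], boxes[rest])
        order = rest[overlaps <= iou_threshold]
    return keep

boxes_xyxy = np.array([[10, 10, 110, 110],
                       [12, 12, 112, 112],     # near duplicate of the first box
                       [200, 200, 300, 300]], dtype=float)
scores = np.array([0.9, 0.8, 0.7])
kept = nms(boxes_xyxy, scores)
print(kept)
```

In practice this is usually run per class, so that overlapping objects of different classes do not suppress each other.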